I had a jarring experience recently, and it kicked off the insights you’re about to read.

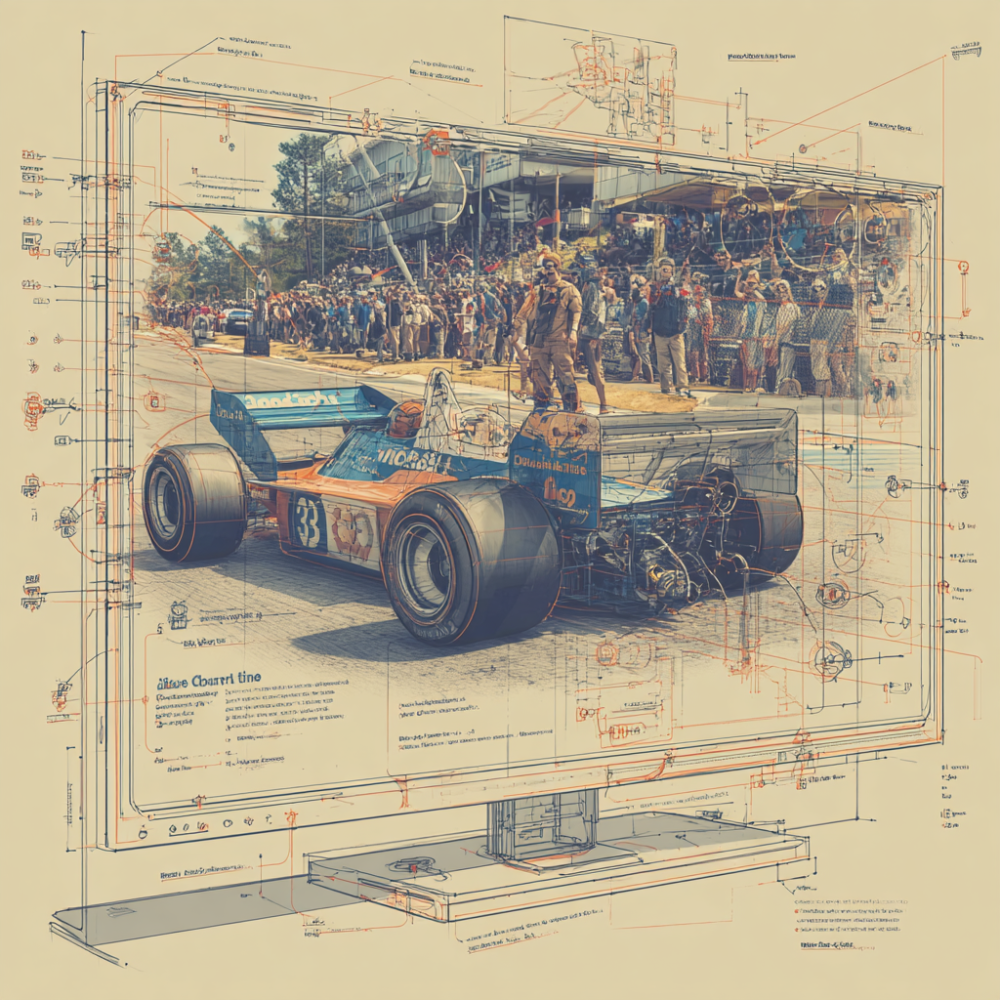

I’m sitting at home, about to tune in to the F1 race in Brazil.

I turn on my TV (it’s a Roku-powered TV). Then I turn on my “cable box”, which is a Google TV box (love it!). I navigate to the guide, find the right channel (it was one of the ESPN channels), and pull up the race.

As the picture comes on screen, I’m confronted with an unusual black bar covering the bottom 25% of the screen (I desperately wish I’d captured a photo of it):

“See Other Viewing Options”, with a purple star button indicating what to press.

Wait, WTF… did someone hack my TV?

I wasn’t actually watching anything on the Roku: not a Roku channel, and I wasn’t using the Roku OS in any way.

It was a streaming TV box, connected to an HDMI port, and even my audio was coming out of the soundbar.

Literally, there was nothing that Roku OS should have been doing in my experience.

Yet here was Roku OS “watching” what I was doing. It was clearly processing my experience. And then it was intruding on it.

The why?

Well, that’s obvious: Roku was trying to steer me away from an experience it had no involvement in, toward one it could “control”. I’d say it was one where they could collect data, but that clearly isn’t the reason, since they were evidently already collecting data on me when they shouldn’t have been.

Clearly Roku wanted to “own my eyes” as a vehicle for its own growth and monetization.

At first I was pissed. Let me be clear: my next TV will not be a Roku.

Then I thought: well, as a product feature to drive growth, it’s kinda brilliant.

And finally I considered the reality of where we are: we’re in a war for eyeballs like never before, and it’s getting messy.

Why the eyeball?

Let’s start with the obvious, shall we? Why would you want someone’s attention?

Monetization, of course!

As the saying goes, “if you’re not paying for the product, then you’re the product!”

In this context, perhaps we should amend that to “then showing you things is the product.” And that product is juicy!

Companies make truly vast sums of money from your presence simply by showing you advertisements. The digital ad market is valued at more than $600B!

In its most recent quarter, Meta Platforms (i.e. Facebook) reported north of $50B in advertising revenue. Some quick back-of-the-napkin math: at ~3 billion users, that’s more than $16 per user per quarter, or more than $5 per user per month.
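To make the napkin math explicit, here is a tiny Python sketch that uses only the rounded figures quoted above ($50B for the quarter, ~3 billion users). The constant and function names are made up for illustration, and the result is a rough average, not a metric Meta reports.

```python
# Back-of-the-napkin ad revenue per user.
# Inputs are the rounded figures from the text, not exact Meta financials.
QUARTERLY_AD_REVENUE_USD = 50e9  # "north of $50B" for the quarter
USERS = 3e9                      # "~3 billion users"
MONTHS_PER_QUARTER = 3


def ad_revenue_per_user(revenue: float, users: float) -> tuple[float, float]:
    """Return (revenue per user per quarter, revenue per user per month)."""
    per_quarter = revenue / users
    per_month = per_quarter / MONTHS_PER_QUARTER
    return per_quarter, per_month


per_quarter, per_month = ad_revenue_per_user(QUARTERLY_AD_REVENUE_USD, USERS)
print(f"${per_quarter:.2f} per user per quarter, ${per_month:.2f} per user per month")
# prints "$16.67 per user per quarter, $5.56 per user per month"
```

Since $50B is a floor ("north of"), the real per-user figures would be a bit higher, which is why the text says "more than".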

And while advertising may be one of the oldest, most established paradigms for monetization, it’s certainly not the only one!

Increasingly, there’s both direct and indirect monetization value in the data I can acquire from you:

- Say I know enough about what shows you watch (ahem, Roku). I can package that and sell it to others who want to target you better.

- If I know about your e-commerce purchase behavior, I can sell that to someone who wants to price dynamically (or introduce incentives and coupons) to trigger more purchases.

- If I know what type of content you’re browsing, your search history, etc., then I can use it to enhance my product, surface better and more relevant insights, and make you even stickier.

That last point cannot be overstated. We live in a world where data insights are part and parcel of every interaction and every product. Getting your eyeball and understanding you is the most effective way to adapt the experience I give you, so that I can keep your eyeball longer and attract it more often.

It’s a virtuous cycle that drives affinity, loyalty and product growth (not just monetization). So naturally, the gloves are off when it comes to getting that eyeball!

But let’s take a quick detour before we get to the war at hand.

What happens when you lose the eyeball?

I woke up recently to a fascinating article about Apple striking a deal in China (article in comments).

In China, Tencent’s “WeChat is the only app opened by a typical Chinese iPhone user.”

Surveys have found that 95% of Chinese users would rather switch devices than give up WeChat.

While we might assume that Apple firmly owns the eyeballs in its device ecosystem, Tencent has managed to get one step closer than the screen: the WeChat super app is its own ecosystem for sending money, booking a doctor’s appointment, getting a taxi… and on and on.

Epic Games has been on a legal crusade against Apple’s commission structure, but in China, Apple had already lost. Taking a deal for only a 15% cut of purchases made through WeChat is about winning the next battle in the war for eyeballs.

And as much as this is a fascinating story about leverage and Apple cutting a deal, the real nugget of insight is in the survey result quoted. Apple users, people who truly love Apple, would ditch their (let’s be honest) premium-quality, premium-priced device because they have an even greater affinity for the ecosystem that owns their eyeballs.

WeChat won the war for eyeballs and, in the process, stole Apple users’ loyalty away from Apple.

Loyalty isn’t just about the money - it’s about the many possible futures you can take.

The war is on!

As I said, the gloves are off. In many domains, they’ve been off for a while. We’ve spent decades in the war for search engine eyeballs, to the point that I’m pretty sure we’re all tired of reading news stories about Google getting sued over its search dominance and practices.

I’d argue that one of the greatest wars for eyeballs of the last decade was between Uber and Lyft. For them, the eyeballs weren’t you and me as consumers (although there was, and clearly still is, a war there!), but rather drivers, the supply side of their ecosystem.

It started with a plethora of carrots:

- Driver incentives to sign on and complete rides.

- Car leases

- Insurance, etc.

It got complex: keeping drivers’ eyeballs by making them so busy that they didn’t have time to do much driving for the competitor. At times it got mean…

And now, one could say that as the market has matured, the players have perhaps realized that their war for driver eyeballs isn’t what’s going to define their future. It rarely seems that Uber and Lyft are supply-constrained anymore… so their war for eyeballs appears to have shifted toward the demand side.

Here’s a current event (and I really love this one): Cameo vs OpenAI.

This one’s being fought under the guise of trademark infringement, but let’s be clear: it’s not really about that. Cameo is a service where you can hire celebrities to “send a message”. I’ve never tried it, but I’ve watched a lot of the videos people post. OpenAI’s Sora can generate that same kind of video without the celebrity actually having to make it.

Why pay for a Cameo when Sora can do it for free? And with Sora, you have fine-grained control over the output.

The war for the eyeball, between a user going to Cameo to get a video of Jeff Bridges as The Dude wishing your buddy good luck at the bowling championship (ya, I made all that up) and that same user just getting Sora to generate it, is existential for Cameo as a business.

And in some cases, the war isn’t really about what’s happening now, but about defining what comes next.

Amazon has sued Perplexity over its Comet AI browser making e-commerce purchases on behalf of users.

One of this year’s themes in the march of AI progress has been “agentic commerce”, and what Perplexity is trying to do looks like an obvious hero use case. But I understand Amazon’s perspective too: core to its experience isn’t the single-item purchase, but having you within its ecosystem (owning your eyeballs) so that it can cross-sell to you, optimize the purchase journey, and on and on. Much like in my opening example, owning the eyeball in e-commerce amounts to near-full control over steering the user’s monetizable behavior, which is clearly incredibly powerful. And I, for one, believe we’re only seeing the opening shots across the bow in the next war for eyeballs in digital commerce.

What does the future hold?

I believe we’re entering a new era, defined by something I like to call Ecosystem Centric Design.

The next wave of companies won’t just define how their products deliver value to their target customers; they’ll define how they create an ecosystem-centric experience that holds the user’s eyeballs and makes their ecosystem a focal point of that user’s life.

The building blocks of that future are already playing out.

In the AI space we see several Ecosystem Centric Design trends.

Anthropic really kicked things off here with the introduction of MCP (Model Context Protocol). While MCP is, in the truest sense, a way to create an open ecosystem and facilitate interconnection, it places the LLM as the ringleader at the center of interactions with the third-party solutions that expose their capabilities via MCP. A bit self-serving in the end?

We’ve seen OpenAI take a similar, albeit more closed, ecosystem approach. Apps in ChatGPT, integrated via the Apps SDK, make ChatGPT the home for initiating, launching and controlling other third-party experiences. The pivot from MCP? Building into ChatGPT via the Apps SDK (and let’s face it, what company doesn’t want to latch onto the ChatGPT user base?) doesn’t mean you’ve done the work to give users of Meta AI, Gemini or Claude the same access. OpenAI has leveraged its market position to go the closed ecosystem route as a means of pursuing its own Ecosystem Centric Design.

And clearly they’re doubling down on that approach and going further. Speaking personally, my phone’s home screen has shortcut widgets for two different LLMs. Access is as easy as pulling the phone out, tapping the screen, and starting to engage (by typing or by voice). There really isn’t any friction, and candidly, as a visual learner, I like reading the output. So why exactly would people need a purpose-built OpenAI device to do the same thing? The answer’s rather obvious at this point (at least I hope so; otherwise I’ve clearly done a poor job with this article): it’s Ecosystem Centric Design. That purpose-built device keeps you (your eyeballs) locked in the OpenAI ecosystem, rather than giving you the flexibility to engage with other solutions.

And I won’t go into the AI browser bit, mostly because I’ve already mentioned it and I’d be a bit repetitive in doing so again! Nevertheless, AI certainly isn’t the only domain in which we see a rapid move toward strategies built around Ecosystem Centric Design.

Getting (physically) closer to my eyeballs

We’ve been watching this battle play out in next-gen immersive compute for a while. By this I am of course referring to spatial computing or, less marketing-y, VR headsets. On one side we see the Apple ecosystem, building on its strong closed-ecosystem DNA and strategy: it pulls the premium-experience lever and trades away flexibility to get there.

Next to it we have what I would term a semi-open ecosystem approach, the likes of which we see from companies such as Meta with its Quest headsets, or HTC with VIVE. It’s curated and controlled, as in a closed ecosystem, but with early signs of more open approaches and the ability for advanced users to “side-load” apps, thereby (in theory) allowing any open option.

And now Google and Samsung Electronics are emerging as new entrants (although others, such as Valve Corporation, play in a similar space), leaning more toward the open ecosystem approach for headsets.

But the common thread across all of these, whether closed, semi-open or open, is that each is making intentional strategic choices about Ecosystem Centric Design and how to leverage it to keep owning the eyeballs.

The next frontier of interface

And finally we get to the next frontier of interface, the one I believe will truly be the sticky one (at maturity) for Ecosystem Centric Design. It sets itself apart from every other example I’ve given for a simple reason: those have all been digital interfaces, a “pane of glass” overlaid on top of the real world, with input levers for interacting with it. But as we move toward augmented reality devices, we start to see a paradigm that can blend the digital world (compute, superpowers) with our IRL interactions in real time. The value of doing so inherently gives control to the device. It owns what my eyeballs see.

I look forward to the day when I put the F1 race on TV and, in real time, as I’m watching a nail-biting moment on screen, my AR glasses show me an alternate view from Lewis Hamilton’s POV as he makes his move to overtake Verstappen.

Now that’s a view on owning my eyeballs that would be truly delightful!