I’ll say it - I got really excited when we started calling it the Metaverse.

Heck, I was jazzed when Meta added legs to avatars (as if the concept of an avatar wasn’t weird enough already!)

The world has come such a long way from the early VR concepts, like the ones I remember.

We are at the crux of bridging a divide between real and digital worlds - truly blending our reality so that an overlay of digital compute and digital intelligence becomes a superpower anyone can have.

But it’s not there yet.

This article is catalyzed by the Meta Ray-Ban Display Glasses. Put them on and you can literally feel the future on your face - but that future is still viewed at a distance. And crossing that gap, I believe, isn’t just about the technology to enable it - because as is apparent, we have so much amazing technology at our disposal already. It’s about the product direction and user experience.

So that’s what I’ve focused on here: what are the killer things that leapfrog us across that bridge, and create the blended experience TODAY that’s not a nice-to-have, but a must-have superpower?

A Basis for Comparison

Before we unpack the future for smart glasses, I thought we ought to focus ever so briefly on three other products that do different types of amazing jobs enhancing our digital immersion and reshaping how we experience reality.

The most accepted immersive device first - Apple’s AirPods (Pro or not!)

Put them in, and you’re in another world - defined around your auditory sensory perception. They started with noise cancellation - truly “yesterday’s” tech. But as they’ve added in spatial audio, adaptive profiles based on your personalized hearing, and transparency modes, Apple has flexed on the user experience - making AirPods immersion comfortable.

These are without a doubt the most commonplace immersion devices - you can walk around all day with a digital device blocking your ear canals, and yet function better than normal.

I’d argue that in delivering this, Apple has created a gold standard for what immersive digital experience ought to be.

And make no mistake, adding health sensors and language translation capabilities sets a new high-water mark, focused on the experience enhancements - the superpowers you truly have with a pair of AirPods in your ears.

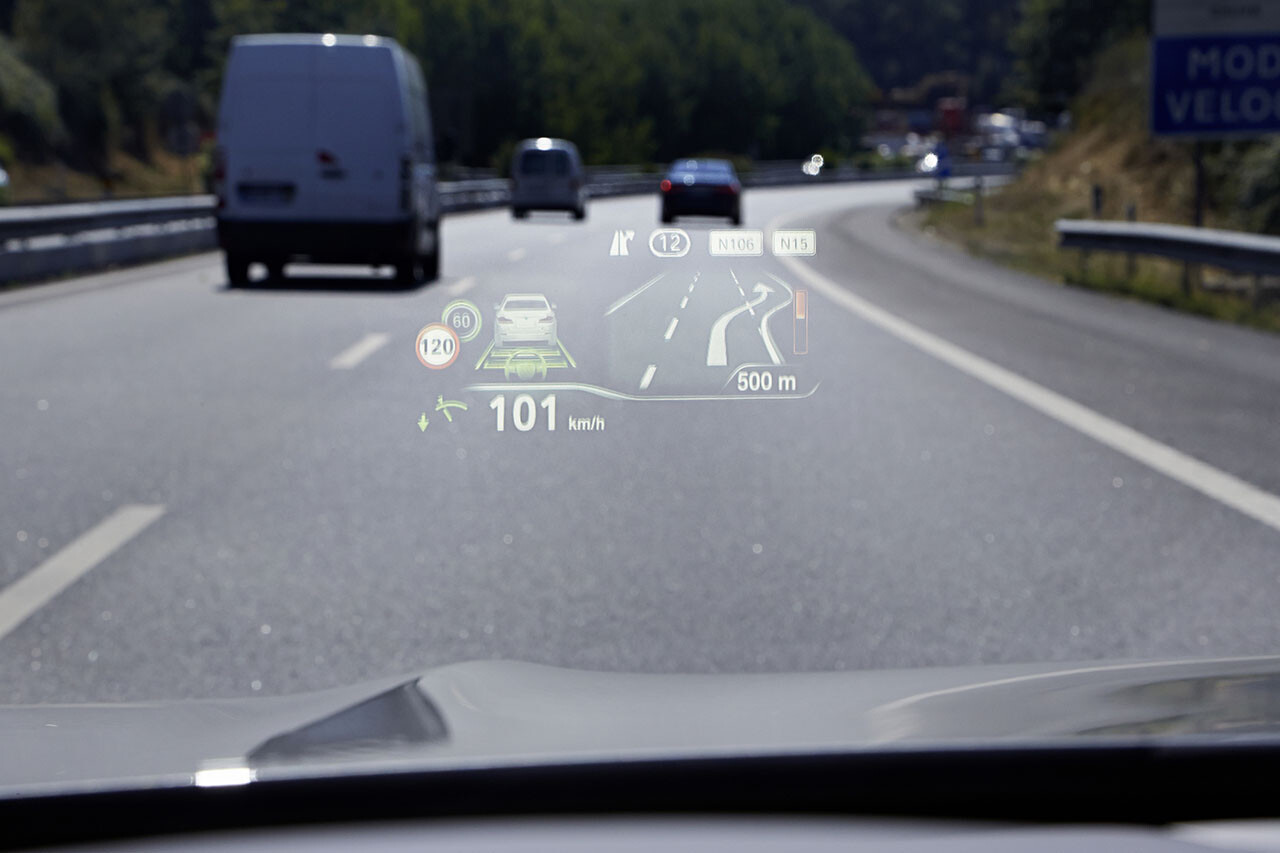

Next we move to perhaps the most seamless of immersive experiences - the BMW Heads-Up Display

And yes there are others, but I’d argue that BMW is far and away one of the best.

It’s present, available, but never intrusive. Only in my field of vision should I so choose.

- Helpful? Absolutely.

- Delightful? Beautifully so.

- Necessary? Nope.

Will I look for a BMW-quality HUD in any car I ever buy in the future? YES. YES. YES.

Without a doubt, the HUD is perhaps the closest analogue to an early smart glasses product - with so many learnings that can be shared. But smart glasses ought to be more than just displays - they are interactivity tools - and that’s not what a HUD aims to be.

Our last basis for comparative learning, OBVIOUSLY, has to be the Apple Vision Pro.

I put it on and fell in love. I was transported quite literally to a different plane of existence. This is the type of immersion I believe so many of us technology geeks dreamt of when we first put on a VR headset. And while it had immersion, it totally lacked the other dimension of blending reality… the actual reality part. You are in your digital world, isolated, a noticeable headset ever present. But Apple excelled at rethinking the UI and UX - the interactivity with the digital device.

And now for the Meta Ray-Ban Display - v1

I’ll say it again, it’s a platform. And one I’d bet on for the future.

Certainly polished and production ready, but still on the wrong side of the chasm for blended reality. Follow along as we explore several dimensions of how Meta could advance that platform today to take major steps to blend a user’s reality:

- Input

- Frictionless Interactivity

- Digital Blending

- Killer Use Cases

The Future of Input

The Meta Ray-Ban Display has two input modalities - both presenting as double-edged swords.

Voice: Just ask Meta AI to do something… and it’s fairly likely to actually do it! We should marvel at living in the age of LLMs that are able to handle our poorly defined instructions and turn them into the outputs we desire. And I truly have no bones to pick with the concept of a voice interface, nor Meta AI. It works!

It just suffers two problems: (i) it’s not private, and (ii) I don’t want to use it - and I’m not sure which is the bigger issue!

The “it’s not private” issue is well known, and despite interesting solutions in the past (such as subvocalization, which is immensely cool and awkward in its own right), there’s really not much that can be done! We know people want privacy for their audio. Heck, even in the demo, as I listened to music loudly, it was clearly stated that the other party couldn’t hear it. Yet when I say to my Meta Ray-Bans: “help! I need to find a bathroom ASAP, lunch didn’t agree with me”, I’ll be cringing with embarrassment.

Which segues nicely to “I don’t want to use it”. And that’s not because of privacy - it’s simply because I’ve never been the person to talk to a device - it’s just not my process. It’s not how I’ve been trained. And there’s a pretty good chance you’re just like me (as evidenced by the fact that you probably didn’t ask Alexa to buy you a new Kindle during Prime Day).

So while voice is absolutely a necessary input modality, it’s clearly not a silver bullet.

The Neural Band: This was literally 50% of the reason I rushed out to try the Meta Ray-Bans. I’ve written previously that I was an early adopter of now-defunct EMG control products, but the cool factor here is off the charts. Detect my muscular inputs and responses? YAAAAS

And it works, impressively so, requiring minimal user training. Perhaps the only “fault” I can find is the need to have different sizes - but that’s nitpicky.

So with two great input media, you’d wonder what the problem is.

And the problem is US!

We are multi-modal creatures, and we’ve been trained on, and already leverage, so many input modalities.

- Let’s say you wear a smart watch - wouldn’t you want to be able to swipe forward/back/up/down on your watch to control your smart glasses?

- Using your smart glasses while at your computer, with your hands already on your keyboard and mouse (input media in their own right) - must you really switch media to interact with your smart glasses?

- Reading an article on your iPhone, when a Messenger notification comes in (and maybe you don’t want everyone on the subway to hear you dictate your response). Seems reasonable you’d want to use your iPhone as the input control.

If smart glasses are to be a persistent layer for us as we navigate the world, then they ought to seamlessly be able to be controlled by all those other input media that we may already have (literally) at our fingertips.

Rethinking the App Paradigm for Frictionless Interactivity

Don your Meta Ray-Bans and amongst the first apps you see are:

- Messages

- Messenger

Yes, it’s quite clear that communication is a core use case - and that makes sense. But I find myself struggling with the OS - or more specifically, the notion of the App.

What I wanted to do was to message someone.

But this isn’t my phone or my computer. I want a level of frictionless interactivity that doesn’t require me choosing my messaging app.

- When I message my wife, it’s almost exclusively by WhatsApp.

- When I message my friend Alex, it’s always on Messenger.

- My parents are SMS people.

And I bet if you go through your top contacts, you’d be able to find patterns much like my own.

Smart glasses are by their nature and design a much lighter-touch interface than a phone or a computer. So shouldn’t our interaction paradigm follow similarly?

A frictionless paradigm would be understanding my intent to communicate → identifying the recipient → crafting the content → and only (optionally) asking which medium… if it wasn’t totally obvious that telling my wife I managed to snag her Halloween edition totes at Trader Joe’s is a WhatsApp message.
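For the technically curious, here is a minimal sketch of that last step: picking the medium from past behavior and only asking when it isn't obvious. Everything in it is my own illustration and an assumption. The `pick_channel` function, the `min_share` threshold, and the made-up message history are all hypothetical, and none of it reflects how Meta's software actually works.

```python
from collections import Counter

def pick_channel(history, recipient, min_share=0.8):
    """Return the recipient's dominant channel, or None if we should ask.

    history   -- list of (recipient, channel) pairs from past messages
    min_share -- hypothetical threshold: how dominant one channel must be
                 before we skip the "which app?" question
    """
    counts = Counter(channel for who, channel in history if who == recipient)
    total = sum(counts.values())
    if total == 0:
        return None  # never messaged this person, so ask
    channel, n = counts.most_common(1)[0]
    return channel if n / total >= min_share else None

# Made-up history that mirrors the patterns described above.
history = (
    [("wife", "WhatsApp")] * 9 + [("wife", "SMS")]
    + [("Alex", "Messenger")] * 5
    + [("parents", "SMS")] * 4
    + [("boss", "SMS"), ("boss", "WhatsApp")]
)

for person in ("wife", "Alex", "parents", "boss", "stranger"):
    print(person, "->", pick_channel(history, person) or "ask the user")
```

The point of the threshold is the "only (optionally) asking" part: when one channel clearly dominates, the glasses just send; when the history is mixed or empty, they ask.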

Mindlessly scrolling “reels”? (I’m pretty sure this is in the “future feature” category). I trust Meta to blend Facebook Reels with Instagram Reels, etc., to give me one curated feed.

While this is arguably rather hard, it also happens to be one of the areas where Meta is uniquely positioned. It has access to so much of my existing historical usage across its different media, and combining that with contextual awareness (from the device itself and, presumably, some Meta AI magic) - it can be made so.

And while I’ve left “killer use cases” for later, I’ll state clearly right now - that this type of frictionless interactivity is a killer UI adoption paradigm. If I’d put those glasses on and there was one messaging app to rule them all… well, my bank account would be just a little bit lighter today.

Digital Blending - Not Just HUD but Indication

So BMW has another killer, albeit quirky, feature - and this one isn’t in the heads-up display.

It’s called “Augmented View”.

It works like this:

You’re approaching an intersection where the navigation says turn left. A camera (on the front of the car) shows a real-world feed (mirroring what you see out your windshield) and the car overlays a GIANT digital turn indicator.

The indicator resizes appropriately based on your distance to the intersection. Small at first, quite large as you get to the intersection.
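I have no idea how BMW actually computes this, but the behavior is easy to sketch. The snippet below is a simple linear interpolation between a "far" and a "near" distance. The function name and every number in it (`near_m`, `far_m`, `min_scale`, `max_scale`) are my own assumptions, picked purely for illustration.

```python
def indicator_scale(distance_m, near_m=10.0, far_m=150.0,
                    min_scale=0.2, max_scale=1.0):
    """Scale factor for the turn arrow: small when far, large when near.

    All defaults are hypothetical values chosen for illustration.
    """
    # Clamp so the arrow stops growing or shrinking outside the range.
    d = min(max(distance_m, near_m), far_m)
    # t runs from 0.0 (at far_m) to 1.0 (at near_m).
    t = (far_m - d) / (far_m - near_m)
    return min_scale + t * (max_scale - min_scale)

for d in (200, 150, 80, 10, 0):
    print(f"{d:>4} m -> scale {indicator_scale(d):.2f}")
```

A real system would more likely scale with perspective (roughly proportional to 1/distance) so the arrow looks anchored to the road, but the clamp-and-interpolate idea is the same.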

Necessary? Honestly not in any way for the driving experience - but an ideal demonstration of digital blending.

And exactly what you expect from smart glasses!

When the smart glasses were captioning a speaker (again this was super cool), there was no visual indication of who the speaker was - no arrow displayed on screen pointing to their face, no object identification box shown surrounding their head.

Here was a blended reality experience - done well - except missing the visual element that blends reality.

In candor, doing this involves a different series of hard problems: object tracking, identification, image overlay, dynamically adjusting the overlay, handling edge cases (like what happens when only part of the “object” aligns to the displayable area), and so on. Not to mention that this is the type of compute and use case that would absolutely BURN through the battery life.
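To make one of those edge cases concrete, here is a minimal sketch of the "only part of the object fits" problem: clip the tracked box to the displayable area and draw only what survives. The `Rect` type, the `visible_part` function, and the 600 x 600 display size are assumptions for illustration, not anything taken from Meta's software.

```python
from typing import NamedTuple, Optional

class Rect(NamedTuple):
    left: float
    top: float
    right: float
    bottom: float

def visible_part(box: Rect, display: Rect) -> Optional[Rect]:
    """Return the portion of `box` inside `display`, or None if none is."""
    left = max(box.left, display.left)
    top = max(box.top, display.top)
    right = min(box.right, display.right)
    bottom = min(box.bottom, display.bottom)
    if left >= right or top >= bottom:
        return None  # the box is entirely off-display
    return Rect(left, top, right, bottom)

display = Rect(0, 0, 600, 600)           # hypothetical display area, in pixels
face = Rect(500, 100, 700, 300)          # speaker's head, half off the right edge
print(visible_part(face, display))       # the clipped box to draw
print(visible_part(Rect(700, 0, 800, 100), display))  # fully outside -> None
```

When the result is `None`, a friendlier design might fall back to an arrow at the display edge pointing toward the speaker, which is exactly the kind of indication I was missing.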

But still… I WANT IT!

And finally, the killer use case!

Wait… what is the killer use case?

A content creator needing to capture a video? Well, the Ray-Ban Meta glasses (those are the ones without the display) excel at that.

Take a phone call? Listen to music? We’ve got a host of solutions.

Point a camera at some ingredients on your counter and ask for a recipe? I haven’t tried it, but I’m told there’s an app for that (on my phone).

Captioning / subtitling someone - absolutely a killer use case. Going back to some of my previous points - those are the types of use cases that blend reality, combining a visual medium and an audio medium - where a visual output (i.e. on the display) is a unique capability not previously achievable.

And while I personally have many more killer use cases in mind (I charge for those by the way!), I hope that the takeaway is clear - we need more!

So why release these now?

Putting on the Meta Ray-Bans, it’s clear that they are the products of many years of iterations, and the start of a platform that Meta will continue to expand. And despite all the shortcomings, they are absolutely usable.

Could Meta have continued to toil away until perfection? Sure! But I have a hard time believing that’s the right strategic approach.

So many of the killer use cases will be defined by people like you and me, experimenting, trying to do something new with this blended technology enabler, that Meta may never have considered. So much of defining what a target blended reality interface should be will likely be the fruit of debate, commentary, and criticism.

Rather than toil on the technology, we’ve been given a platform to take our dreaming of augmented reality to the next level - and I for one love that!

But my biggest hope for the interface, and the future, is that Meta embraces the implied role it has at the center of so much of my social and content life, along with the explicit permission I give it in donning its smart glasses as one of my compute platforms of the future. With that, it can redefine what my hybrid experience with reality could be, leveraging all that context and knowledge of me to remove the friction in my reality.

Leveraging technology to remove the friction in our lives. Isn’t that actually the rallying cry for what mixed reality ought to be?