What do we do to learn something new? We look it up!

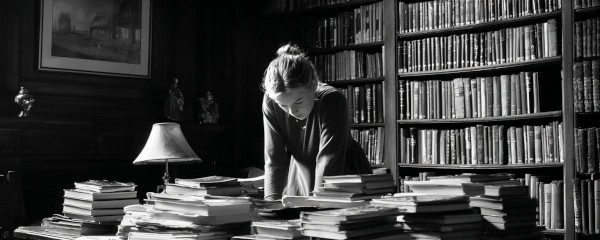

In high school (this was a while ago), I had to write a research report. Like an expert sleuth with the deductive skills of Hercule Poirot, I structured a query, plugged it into Google (okay, it was Yahoo!), synthesized the results, and refined my search… again and again.

The process wasn’t simply about learning the answer - it was about learning the surrounding space. Triangulating, if you will. All those paths not traveled, the linked pages I read and bounced off of, became knowledge I gained along the way.

Fast forward to today.

As my child watches Sesame Street (yup, I’m that parent) and learns about “looking things up,” I’m wondering: will LLMs and agentic browsing rob him of the knowledge he could gain from exploring those paths not traveled?

Ask a question → get the answer

Direct. No meandering. No alternatives.

But without context, how do you know if it’s right?

- How do you know your LLM hasn’t just hallucinated some data to make you happy?

- How do you know there isn’t more to learn or additional paths to probe?

- How do you triangulate the space when the whole value proposition is about not having to see any of the surrounding space?

Research in cognitive development suggests that active information seeking, driven by what psychologists call epistemic curiosity, is crucial for deep learning and critical thinking skills.

When we eliminate the journey, we might be eliminating the learning.

What a thoughtful AI might say instead

I’m all for the march of progress and the efficiency that LLMs and agentic browsing bring. But perhaps, as these tools mature, they will evolve to help us go further. Can you imagine your AI saying:

- “Alternative viewpoints available - would you like to see?”

- “There is conflicting evidence - I’ve shown you the most prominent viewpoint.”

- “This is a fascinating subject - would you like me to help you go deeper?”

These are just a few non-obvious ways AI products could add immense value for users while preserving the learning process.

A moral imperative, not a feature request

But I’ll close with the philosophical thinking underlying all of this:

If we can agree that LLMs and agentic browsing will leave the next generation with a more superficial understanding of the world, then shouldn’t there be a moral imperative to help people maintain some of the pursuit of knowledge they’re losing?