We talk about a great many things where AI is superior to humans. But for some reason, science experiments feel like something we hold dear as a domain of human superiority, believing that there’s an inherent creativity in forming a hypothesis, designing an experiment to test it, and proceeding from there.

I’ve been a believer in this thinking for a while, and a recent example seemed to prove it.

My AI coding assistant (this time it was Gemini, but I spread my love) was helping me work on a web animation. And it got it horribly wrong. No matter what I prompted, it seemed to have hit a wall - the “thinking” showed an analysis pipeline… and there was rigor there. But it was totally, painfully (and very frustratingly) stuck - it kept regenerating the same code, telling me it had fixed something, when in reality it had changed NOT A SINGLE CHARACTER!

The human in the room (that’s me) ran some experiments: commenting out different bits of code, adding logging statements, and watching the results. I kept at it until I finally found something that behaved weirdly.
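For the curious, that comment-things-out routine can be sketched as a small ablation loop. This is a minimal illustration, not my actual animation code: the step names, the `render` function, and the planted bug are all hypothetical stand-ins.

```python
# Hypothetical stand-in for the animation pipeline: each named step can be
# switched off, the way one would comment out a block of code.
STEPS = ["scale", "easing", "clamp"]


def render(enabled):
    """Return the element's final x position for the enabled steps."""
    x = 100.0
    if "scale" in enabled:
        x *= 2
    if "easing" in enabled:
        x -= 20
    # Planted bug for the demo: clamp misbehaves only when easing also ran.
    if "clamp" in enabled and "easing" in enabled:
        x = 0.0
    return x


def has_symptom(x):
    """The observed misbehavior: the element ends up stuck at the origin."""
    return x == 0.0


def ablate(steps, run, symptom):
    """Disable one step at a time and record whether the symptom remains."""
    findings = {}
    for step in steps:
        remaining = set(steps) - {step}
        findings[step] = symptom(run(remaining))
    return findings


baseline_broken = has_symptom(render(set(STEPS)))
findings = ablate(STEPS, render, has_symptom)
# Steps whose removal makes the symptom disappear are the ones worth a "why?".
suspects = [step for step, still_broken in findings.items() if not still_broken]
print(baseline_broken, suspects)
```

The loop does not explain anything by itself. It only narrows down where the interesting "why" question lives, which is exactly the kind of question that finally got things unstuck.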

When I asked Gemini the “why” question - why is the behavior different when this code is removed? - a (virtual?) lightbulb went on for it.

Did I have human ingenuity? Or did I just have an aptitude for experimentation and experiment design that AI lacks?

At least this was my thinking until I came across this article.

I began searching for other examples, and this one is certainly not alone. There are published cases of AI’s achievements in experiment design. But I wonder, is this confirmation bias? Do we laud AI’s achievements in experiment design because they are so few, and not even bother talking about our null hypothesis, the cases where AI fails?

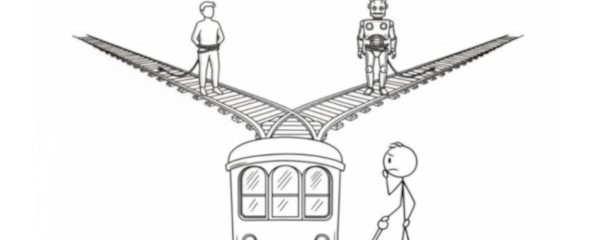

So I thought, let’s be scientific about this (see what I did there?) and break down some key principles to understand where and how AI can produce beneficial, or even superior, experiment designs.

Hypothesis Testing and Goal Seeking

What happens when we set out to test a hypothesis? There’s a goal in mind and, more often than not, a historical design for running the experiment (or something similar) that we know has worked.

Human approach: We typically start with incremental improvements to existing designs. We ask “how can we make this 2% better?” We break down why something worked as an experiment design, and try to advance it - and in the process demonstrate that we are often constrained by our understanding of what’s worked before.

AI approach: As was evidenced when the Caltech team’s AI tried to produce an improved experimental design, it wasn’t constrained by aesthetic preferences or conventional wisdom. It wasn’t concerned with understanding why a previous experiment had worked - it was purely goal seeking. The AI produced designs that looked like “alien things” with “no sense of symmetry, beauty, anything.”

Winner

AI wins on pure goal optimization, but humans excel at defining meaningful goals and constraints.
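To make the contrast concrete, here is a toy sketch of the two search styles. It is an illustration under my own assumptions, not the Caltech team’s method: the `score` function is an invented objective with a modest peak near the “known good” design and a taller peak far away from it.

```python
def score(x):
    """Toy objective (an assumption for illustration): a modest peak at x=20
    and a taller, narrower peak at x=80."""
    return max(50 - abs(x - 20), 90 - 3 * abs(x - 80))


def incremental(start, lo=0, hi=100):
    """Human-style tweaking: accept a neighboring design only if it scores better."""
    x = start
    while True:
        neighbors = [n for n in (x - 1, x + 1) if lo <= n <= hi]
        best = max(neighbors, key=score)
        if score(best) <= score(x):
            return x  # no small change helps, so we stop here
        x = best


def goal_seeking(lo=0, hi=100):
    """Pure goal seeking: score every candidate design, however odd it looks."""
    return max(range(lo, hi + 1), key=score)


tweaked = incremental(start=15)
searched = goal_seeking()
print(tweaked, score(tweaked))
print(searched, score(searched))
```

Starting from a design we already trust, the incremental search settles on the nearby peak and never crosses the valley to the better one. The exhaustive search has no attachment to the starting point, but note that a human still had to decide what `score` measures and which range of designs is allowed.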

Identifying Seemingly Uncorrelated Alternatives

This is where the plot thickens. As one of the lead researchers, Rana Adhikari, admitted: “If my students had tried to give me this thing, I would have said, ‘No, no, that’s ridiculous.’”

AI approach: The AI found decades-old, esoteric theoretical principles from Russian physicists. These were things that the best and brightest researchers would never have considered (and certainly wouldn’t have put forward) because no one had ever pursued them experimentally!

Human approach: While I hesitate to say that we seek to “please our masters” and are apprehensive of throwing out outlandish ideas, we have cultivated a feedback loop in which such approaches often diminish our stature in our professional fields. Pitching those seemingly uncorrelated alternatives (as the AI did) is not exactly a stellar career move!

Winner

While humans may win at leveraging conventional wisdom, AI is far superior here: its lack of, well, any need for career progress lets it surface concepts and approaches humans might look past.

Access to Massive Data Repository and Synthesis

Here’s where AI starts to really flex.

Human approach: We’re limited by our working memory, recent reading, and collaborative networks. Even the most knowledgeable scientists can’t simultaneously consider all the hundreds of thousands of permutations and configurations.

AI approach: The AI discovered experimental designs that borrowed ideas from a completely separate area of study, using massive processing power to mix and match in a way humans can’t, and to run thousands of simulations overnight.

Winner

AI by a landslide. The scale of synthesis is simply incomparable.

Creative Thinking and Unbounded Proposals

Of course, we come to the crux of how I’ve titled this piece:

Hallucination is a superpower.

When the team first saw the AI’s solution they must have thought ‘My program has a bug because the solution cannot exist.’

Human approach: We’re constrained by what we believe is possible. Our creativity operates within the bounds of our understanding of physical laws and practical limitations.

AI approach: Hallucinate an approach that may make no “intuitive sense”, without specific concern for reality or rightness.

Winner

This is where AI’s “hallucination” becomes a superpower. It’s willing to propose things that seem impossible.

The Hallucination Advantage

Have we hit on something profound here?

AI is able:

- To optimize toward a goal, especially when told that its job is to design experiments and test them.

- To surface and consider unproven data points that humans readily ignore or overlook.

- To process massive amounts of data and permutations, identifying by brute force things that are impossible for humans to intuit.

- And most importantly, to “hallucinate” a solution that defies all preconceived notions of “rightness”, with no barrier to proposing the seemingly impossible.

So perhaps, what we call AI “hallucination” - its tendency to confidently generate content that might be factually wrong - could actually be its greatest strength in experimental design.

“It takes a lot to think this far outside of the accepted solution. We really needed the AI.” - Adhikari

Humans are evolutionarily wired to avoid being wrong. Being wrong in the ancient world could get you killed. But in experimental design, being willing to be spectacularly wrong is often the path to breakthrough discoveries.

AI doesn’t have ego. It doesn’t care about looking foolish to its peers. It can design things that look like “alien things or AI things” without any concern for aesthetics or conventional wisdom.

The real superpower isn’t that AI hallucinates - it’s that AI is comfortable being wrong.

And perhaps that’s exactly what experimental design needs: an intelligence willing to propose the impossible, confident enough to suggest the ridiculous, and unconstrained enough to ignore what everyone knows won’t work.

So perhaps… maybe, just maybe… we should explore a branch of AI models tuned for experimental design, where hallucination is the feature and not the bug.