Microsoft recently laid off 9,000 employees. An Xbox executive’s solution? “Use Copilot for emotional support and career counseling.”

Reading that headline gave me serious déjà vu.

It took me back, not to some recent AI conversation, but (and I know I’m dating myself) to 1991.

Meet Dr. Sbaitso

He was the original “digital” therapist, a program that came bundled with Sound Blaster cards 30+ years ago.

Dr. Sbaitso’s therapeutic approach was… limited. His most common responses:

- “WHY DO YOU FEEL THAT WAY?”

- “THAT’S NOT MY PROBLEM.”

- “TELL ME MORE ABOUT THAT.”

Still, “talking” with Dr. Sbaitso was an engaging (and often hilarious) experience.

Could Dr. Sbaitso provide therapeutic answers? Well, not to the extent that Copilot can today. Modern AI can craft nuanced responses, track context, and even pick up on emotional cues in text.

But therapy is about more than just answers - so I wonder, is Copilot (ahem, ChatGPT) any better a therapist than Dr. Sbaitso was?

Eloquence isn’t empathy

GPT models can write beautiful, contextually appropriate responses about grief, loss, and career transitions. They can mimic therapeutic language with impressive sophistication.

But do they actually care about the human on the other side?

We focus obsessively on model performance - accuracy, reasoning, knowledge retention. We have gold standards for measuring everything from language understanding to code generation.

The AI therapy space is exploding with startups: Replika for companionship, Woebot Health for mental health, Wysa for emotional support, Youper for mood tracking. All promising to understand and help heal human emotions.

Promising as these solutions may be, can we actually replace the “human” in helping people deal with human emotions?

The irony of the moment

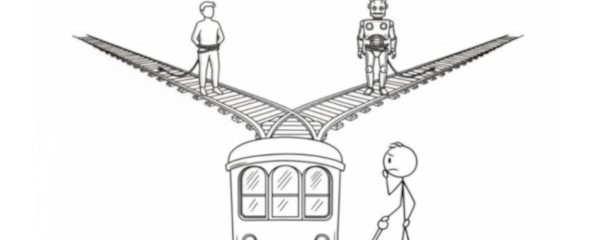

When 9,000 people lose their jobs - a moment of intense vulnerability, financial stress, and emotional turmoil - an executive suggests they turn to the very technology that (let’s be honest here) contributed to their unemployment.

The irony is staggering.

Therapy isn’t just about getting the “right” answers. It’s about being seen, heard, and understood by another conscious being who genuinely cares about your wellbeing. It’s about vulnerability, trust, and the messy, unpredictable process of human growth.

Where’s the empathy benchmark?

If we can benchmark model accuracy, reasoning, and safety - why don’t we have benchmarks for measuring model empathy?

How do we know when AI emotional support is “good enough”? What’s our evaluation framework for artificial care? When someone is in crisis, how do we measure whether they’re getting genuine help or sophisticated pattern matching?

Maybe the answer isn’t better AI empathy. Maybe it’s recognizing that some human experiences shouldn’t be automated away - especially when people are at their most vulnerable.

If you’re thinking through where AI helps and where it harms in human-facing work, I’d love to hear how you’re drawing the line.