It started with a bang! SpaceX is acquiring xAI in what’s being called the largest merger in history, at a combined valuation of $1.25 trillion. But what really caught my eye was something else in the announcement: Elon Musk says we’ll have data centers orbiting Earth within 2–3 years.

My first instinct? Classic Musk hyperbole (because… well I don’t even need to acknowledge the why!)

But then I started thinking about it differently. Not as science fiction. As a product strategy question.

Here’s what we know: the hyperscalers are throwing ungodly amounts of money at data center infrastructure. Meta just committed $600 billion through 2028. Oracle has a $455 billion backlog. That’s a 359% year-over-year increase. Google, Microsoft, and Amazon are all racing to secure nuclear power agreements for future facilities.

Something doesn’t add up on the ground. And that’s exactly why Musk’s space play might be more than hot air.

So I decided to give this the “light strategic treatment” it deserves. Not an engineering deep-dive. A product manager’s analysis of what needs to be true for space-based data centers to make business sense.

Ground Rules (Before You @ Me)

Let’s establish some guardrails:

- We’re assuming SpaceX and xAI can actually pull off the technical execution. Yes, I know that’s a big assumption. But, for this analysis, we’re giving them the benefit of the doubt on the engineering.

- We’re treating a “space data center” as a product. Not a moonshot dream. A product with customers, economics, competitive dynamics, and a go-to-market strategy.

- I’m using a framework I love for breaking down problems: “what needs to be true”. It’s a tool in my toolkit that I’ve used in approaching product strategy decisions for years. By evaluating what needs to be true, you can determine whether an idea can be transformed into reality (and how likely that is).

Let’s dig in.

What Needs to Be True #1: Capacity Must Be Undersupplied

Here’s the fundamental question: is there actually a capacity problem worth solving?

The answer is an emphatic yes.

Oracle’s $455 billion remaining performance obligation isn’t just a number; it represents signed contracts they literally cannot fulfill fast enough. They’ve delayed multiple OpenAI data centers due to material and labor shortages. TD Cowen noted that Oracle was “notably absent” from companies with major long-term US data center roadmaps because they simply can’t secure the financing and capacity to build.

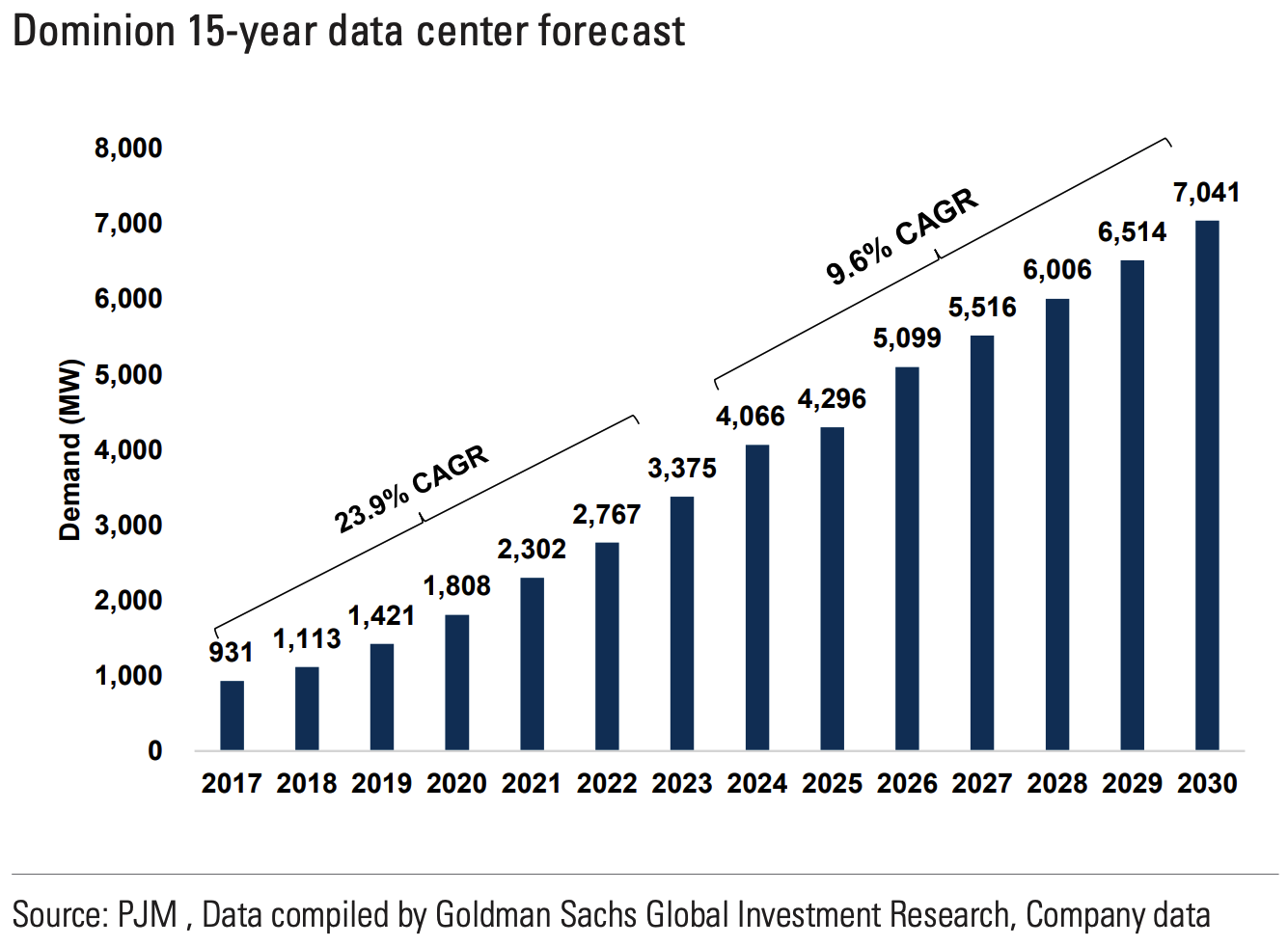

Goldman Sachs projects a 10GW data center shortfall by 2028. The International Energy Agency (IEA) forecasts global data center electricity demand will double to 945 TWh by 2030, roughly equivalent to Japan’s total electricity consumption.

When supply can’t keep up with demand, prices rise. Customers leave money on the table. And importantly, alternatives become viable that wouldn’t make sense in a balanced market.

Think about it from basic economics: if supply exceeded demand, we’d see a price war. Data center operators would be competing on price to fill capacity in a race to the bottom. Instead, we’re seeing the opposite: premium pricing, allocation constraints, and customers like OpenAI scrambling between providers.

Mark Zuckerberg recently announced “Meta Compute” as a distinct organization precisely because compute capacity has become a strategic asset, not just a cost center.

Verdict: The demand signal is real. Compute capacity is struggling to keep pace.

What Needs to Be True #2: Heat Must Be a Solvable Problem

If you’ve never thought about data center cooling (most folks haven’t!!!), let me give you the 30-second version: it’s a massive, expensive nightmare.

Modern AI racks run hot. Really hot.

Traditional air cooling can’t handle it. That’s why the liquid cooling market is projected to grow from $2.84 billion in 2025 to $21.15 billion by 2032, a 33% CAGR.
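If you want to sanity-check a growth claim like that, the arithmetic is short. Here’s a minimal sketch using only the two market-size figures quoted above; the function name `cagr` is my own, not from any report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.33 means 33%)."""
    return (end_value / start_value) ** (1 / years) - 1

# Figures quoted above: $2.84B in 2025 growing to $21.15B by 2032 (7 years).
liquid_cooling_cagr = cagr(2.84, 21.15, 2032 - 2025)
print(f"Implied CAGR: {liquid_cooling_cagr:.1%}")
```

The result lands at roughly 33%, which matches the projection as quoted.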

Companies like Vertiv, Iceotope, Asperitas and Submer are all racing to solve this problem with cooling and immersion systems. But the solutions are expensive, complex, and add another layer of operational overhead.

Here’s what’s interesting about space: it’s cold.

Really cold.

The ambient temperature of space is approximately 2.7 Kelvin (−455°F). Heat dissipation becomes a passive radiative process rather than an active pumping-and-chilling operation.

Think about what this means operationally:

- On Earth: You need chillers, pumps, cooling towers, water treatment, specialized HVAC systems, and a small army of facilities engineers. All of this consumes power. Often 30–40% of a data center’s total energy use goes to cooling.

- In Space: You radiate heat into the void. Period.

The cost savings from eliminating active cooling aren’t marginal. They’re transformational.
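Passive doesn’t mean hardware-free, though: with no air or water to carry heat away, everything has to leave through radiator surface area. For a rough sense of scale, here’s a back-of-the-envelope sketch using the Stefan-Boltzmann law. The 1 MW heat load, 300 K radiator temperature, and 0.9 emissivity are hypothetical inputs I picked for illustration, and the model ignores heat absorbed from sunlight and from Earth, so treat the output as an optimistic floor rather than a design number.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)

def radiator_area_m2(heat_watts, radiator_temp_k, emissivity=0.9, sink_temp_k=2.7):
    """Ideal one-sided radiator area needed to reject heat_watts by radiation alone.

    Assumes the radiator only sees deep space (sink_temp_k) and absorbs
    no sunlight or Earth infrared, which is an idealization.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_watts / net_flux

# Hypothetical example: 1 MW of waste heat, radiator surface held at 300 K.
area = radiator_area_m2(heat_watts=1_000_000, radiator_temp_k=300.0)
print(f"{area:,.0f} m^2 of radiator for 1 MW at 300 K")

# Running the radiator hotter shrinks the area quickly (flux scales with T^4).
hot_area = radiator_area_m2(heat_watts=1_000_000, radiator_temp_k=350.0)
print(f"{hot_area:,.0f} m^2 of radiator for 1 MW at 350 K")
```

Under these assumptions the answer is on the order of a couple thousand square meters per megawatt. You trade chillers and water for surface area.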

Verdict: Space solves the heat problem at its root cause, not the symptom.

What Needs to Be True #3: Power Must Be Available and Sufficient

Now we get to the big question: can you actually power meaningful compute in space?

First, let’s acknowledge the scale of the power problem on Earth:

- US data centers consumed 183 TWh in 2024.

- That’s projected to hit 426 TWh by 2030, a 133% increase.

- BloombergNEF forecasts data center capacity in PJM (the eastern US grid operator) could reach 31GW by 2030, nearly matching all the new generation expected in that region.
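Annual TWh and GW of capacity get thrown around interchangeably, so it helps to convert between them. This is a small sketch using the two US consumption figures above; it assumes demand is spread evenly across the year (real load isn’t perfectly flat), and the helper names are mine.

```python
HOURS_PER_YEAR = 8760

def percent_increase(old: float, new: float) -> float:
    """Percentage growth from old to new."""
    return (new - old) / old * 100

def average_gigawatts(twh_per_year: float) -> float:
    """Constant power draw (GW) that would add up to the given annual energy (TWh)."""
    return twh_per_year * 1000 / HOURS_PER_YEAR  # TWh -> GWh, then divide by hours

growth = percent_increase(183, 426)
gw_2024 = average_gigawatts(183)
gw_2030 = average_gigawatts(426)

print(f"Growth 2024 -> 2030: {growth:.0f}%")
print(f"Average draw: {gw_2024:.1f} GW (2024) vs {gw_2030:.1f} GW (2030)")
```

Seen this way, the projected increase is in the neighborhood of 28 GW of additional around-the-clock demand, which is the scale the gigawatt-sized deals below are chasing.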

The hyperscalers know this is an existential constraint. That’s why:

- Google signed a deal with Kairos for 500MW of small modular nuclear reactors by 2030–2035

- Amazon committed to 5GW of nuclear by 2039

- Meta is building a 2GW facility in Louisiana that will be one of the largest data centers in the world

In space, solar is abundant. “It’s always sunny in space!” as Musk noted in his merger announcement. But is it enough?

Current Starlink satellites generate power for their communications equipment through dual solar arrays. And while solar power might be sufficient for a communications satellite, there’s going to be a significant delta in power needs for a space data center satellite.

The simple answer is more solar panels, right???

Scaling solar panels in space is theoretically simpler than on Earth. No weather. No night (in certain orbits). No atmospheric absorption. But there are practical limits to how large you can make a satellite’s solar array while maintaining structural integrity and maneuverability.

At some point, you hit a ceiling where the compute you can physically fit in a satellite exceeds the power available from attached solar panels.
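To get a feel for where that ceiling sits, here’s a rough sizing sketch. The solar constant (about 1,361 W per square meter above the atmosphere) is a well-established figure; the 30% cell efficiency, the 1 MW load, and the `sunlit_fraction` parameter are assumptions I’ve chosen for illustration, not Starlink specifications.

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # approximate sunlight intensity above the atmosphere near Earth

def array_area_m2(load_watts, cell_efficiency=0.30, sunlit_fraction=1.0):
    """Solar array area needed to supply load_watts on average.

    cell_efficiency and sunlit_fraction are illustrative assumptions;
    sunlit_fraction < 1 models an orbit that spends time in Earth's shadow.
    """
    usable_w_per_m2 = SOLAR_CONSTANT_W_M2 * cell_efficiency * sunlit_fraction
    return load_watts / usable_w_per_m2

# Hypothetical 1 MW compute payload in continuous sunlight.
always_sunny = array_area_m2(1_000_000)
# Same payload in an orbit that is sunlit only 60% of the time.
with_eclipse = array_area_m2(1_000_000, sunlit_fraction=0.6)

print(f"Continuous sunlight: {always_sunny:,.0f} m^2")
print(f"60% sunlit orbit:    {with_eclipse:,.0f} m^2")
```

Even in the best case, a single megawatt needs thousands of square meters of panel under these assumptions, before counting batteries or degradation.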

The obvious solution? Nuclear.

And if you don’t think Musk has already thought about this, you haven’t been paying attention. The man who runs SpaceX, which has been working on deep space propulsion, is certainly aware that nuclear power enables missions beyond what solar can support.

Prediction (tongue firmly in cheek): Within 5 years, Elon Musk will be the world’s next major nuclear power. You heard it here first.

Verdict: Solar works to start, but nuclear is the long-term answer for serious scale.

What Needs to Be True #4: Bandwidth Must Support Data Center Workloads

Data centers process enormous volumes of data. Can you actually move bits fast enough between space-based compute and ground stations?

Traditional satellite communication uses radio frequencies, and for most applications, it’s nowhere near sufficient for data center workloads.

But SpaceX has already solved this problem with Starlink.

Each Starlink satellite contains three optical inter-satellite links (ISLs) operating at up to 200 Gbps. The constellation has over 9,000 space lasers connecting thousands of satellites, moving approximately 42 petabytes per day.

SpaceX is now developing mini lasers for third-party satellites: 25 Gbps at distances up to 4,000 km. They’ve successfully tested these in orbit.
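Those numbers are easier to reason about once they’re in the same units. The sketch below converts the 42 petabytes per day figure into an average aggregate rate, then estimates how long a single link would take to move a dataset. The 1 PB dataset is a hypothetical size I picked, petabytes are treated as decimal (10^15 bytes), and protocol overhead and link availability are ignored.

```python
BITS_PER_BYTE = 8
SECONDS_PER_DAY = 86_400

def average_tbps(petabytes_per_day: float) -> float:
    """Average aggregate throughput in terabits per second."""
    bits = petabytes_per_day * 1e15 * BITS_PER_BYTE
    return bits / SECONDS_PER_DAY / 1e12

def transfer_hours(dataset_petabytes: float, link_gbps: float) -> float:
    """Hours to move a dataset over one link running flat out (no overhead)."""
    bits = dataset_petabytes * 1e15 * BITS_PER_BYTE
    return bits / (link_gbps * 1e9) / 3600

constellation_tbps = average_tbps(42)
hours_at_25 = transfer_hours(1, 25)    # third-party mini laser rate quoted above
hours_at_200 = transfer_hours(1, 200)  # peak ISL rate quoted above

print(f"42 PB/day is about {constellation_tbps:.1f} Tbps on average")
print(f"1 PB over 25 Gbps:  {hours_at_25:.0f} hours")
print(f"1 PB over 200 Gbps: {hours_at_200:.0f} hours")
```

The takeaway: a single link is plenty for inference traffic, but moving training-scale datasets would lean on many links in parallel.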

The challenge with satellite internet for consumers is the last mile: getting data from a satellite to millions of distributed devices. That’s fundamentally hard at scale, and so we default to the same wireless radio frequency technology that serves us well for our mobile phones.

But data center workloads don’t need to hit millions of endpoints. They need to communicate with:

- Ground stations (fixed infrastructure with directional antennas)

- Other space-based compute nodes (other satellites)

Both of these are point-to-point communication problems between known endpoints, not broadcast problems. Laser communication is perfectly suited for this.

Imagine a mesh of space data centers communicating via laser links, with high-capacity laser downlinks to ground stations that then connect to traditional fiber infrastructure. The constellation is the backhaul.

Verdict: Laser ISL technology, already demonstrated at scale, makes bandwidth viable.

What Needs to Be True #5: SpaceX Must Have the Capital to Fund Development

Let’s talk money.

Building anything at scale in space isn’t cheap. Does SpaceX actually have the resources to pursue this vision?

The numbers are more compelling than you might think:

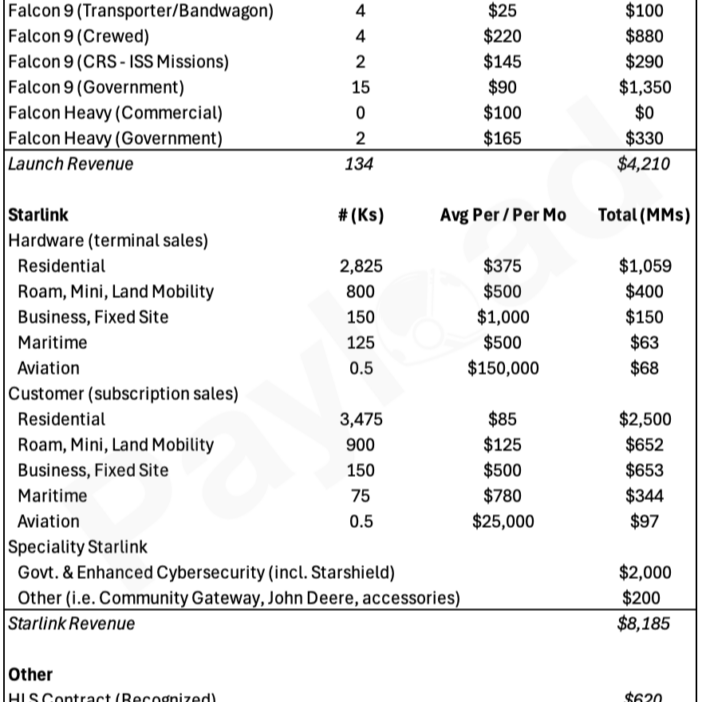

- SpaceX 2025 projected revenue: $15.5 billion

- Starlink revenue: ~$10 billion (representing the majority of SpaceX’s total)

- Starlink subscribers: 9+ million users across 100+ countries

- Starlink profitability: 60–80% gross margins, now cash-flow positive

- 2025 free cash flow: approximately $2 billion

Starlink has transformed from a capital-intensive buildout into SpaceX’s cash machine. The subscription model generates predictable recurring revenue that funds continued expansion and R&D.

Then there’s the launch business itself.

SpaceX operates the only reusable orbital rockets at scale. A Falcon 9 launch is priced at approximately $60 million, but SpaceX’s internal cost per launch is far lower due to booster reusability. This creates monopolistic dynamics: SpaceX can undercut competitors while maintaining healthy margins, or raise prices without losing customers because alternatives don’t exist at comparable capability.

What about xAI?

Let’s be honest: xAI is currently burning cash (reportedly around $1 billion per month) competing with OpenAI, Anthropic, and Google. The merger arguably gives xAI access to SpaceX’s cash flows while giving SpaceX a customer with insatiable compute demand.

But for the space data center thesis, xAI revenue isn’t the point. SpaceX’s operational cash generation is what funds the buildout.

Verdict: SpaceX has the financial engine to self-fund meaningful R&D and deployment.

What Needs to Be True #6: The Know-How Must Exist

This is where the SpaceX-xAI merger becomes strategically elegant.

Building a data center in space requires expertise in:

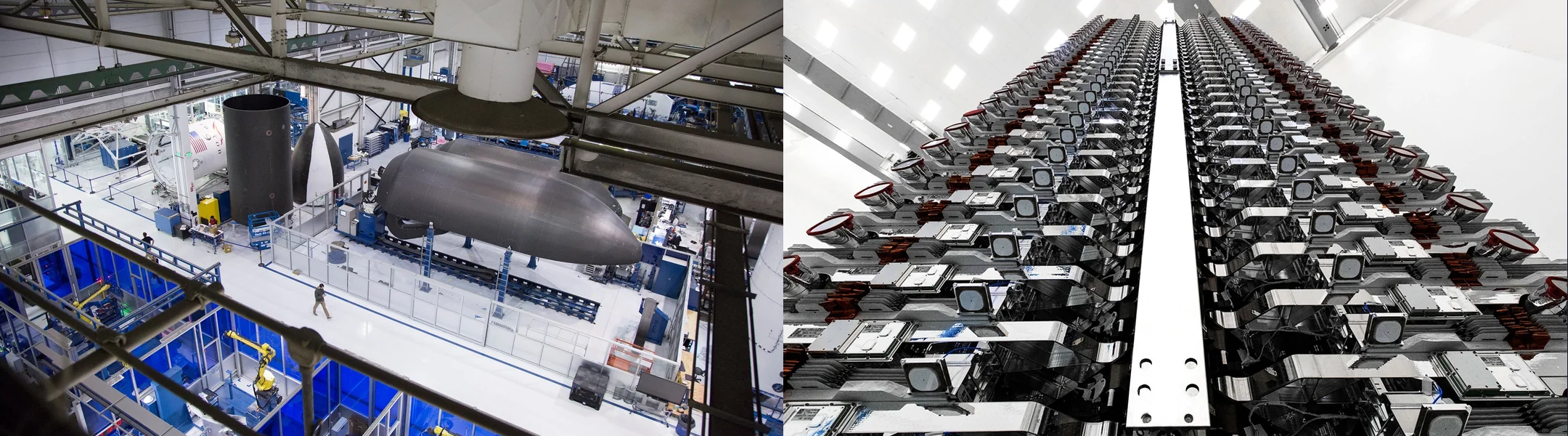

- Satellite design and manufacturing (SpaceX: thousands of satellites deployed)

- Launch operations (SpaceX: monopoly-level capability)

- Orbital mechanics and constellation management (SpaceX: Starlink)

- Space-grade communications (SpaceX: laser ISL technology)

- Data center architecture (xAI: Memphis supercomputer facilities)

- AI workload optimization (xAI: Grok development)

No other company on Earth has this complete stack.

Amazon is the closest competitor, with its Kuiper/Leo satellite constellation, AWS infrastructure expertise, and Blue Origin (ok, that’s not exactly Amazon) for launch capability. But Kuiper is years behind Starlink, and Blue Origin lacks SpaceX’s launch cadence.

Google has cloud infrastructure but no satellite play. Microsoft partners with others. Meta is focused on ground-based buildout.

The combination of SpaceX + xAI creates the only vertically integrated player that can conceivably design, build, launch, operate, and consume space-based compute infrastructure.

Verdict: The merged entity has capabilities no competitor can match.

Design Consideration: Longevity and Maintenance

Now for some hard truths about operating hardware in space.

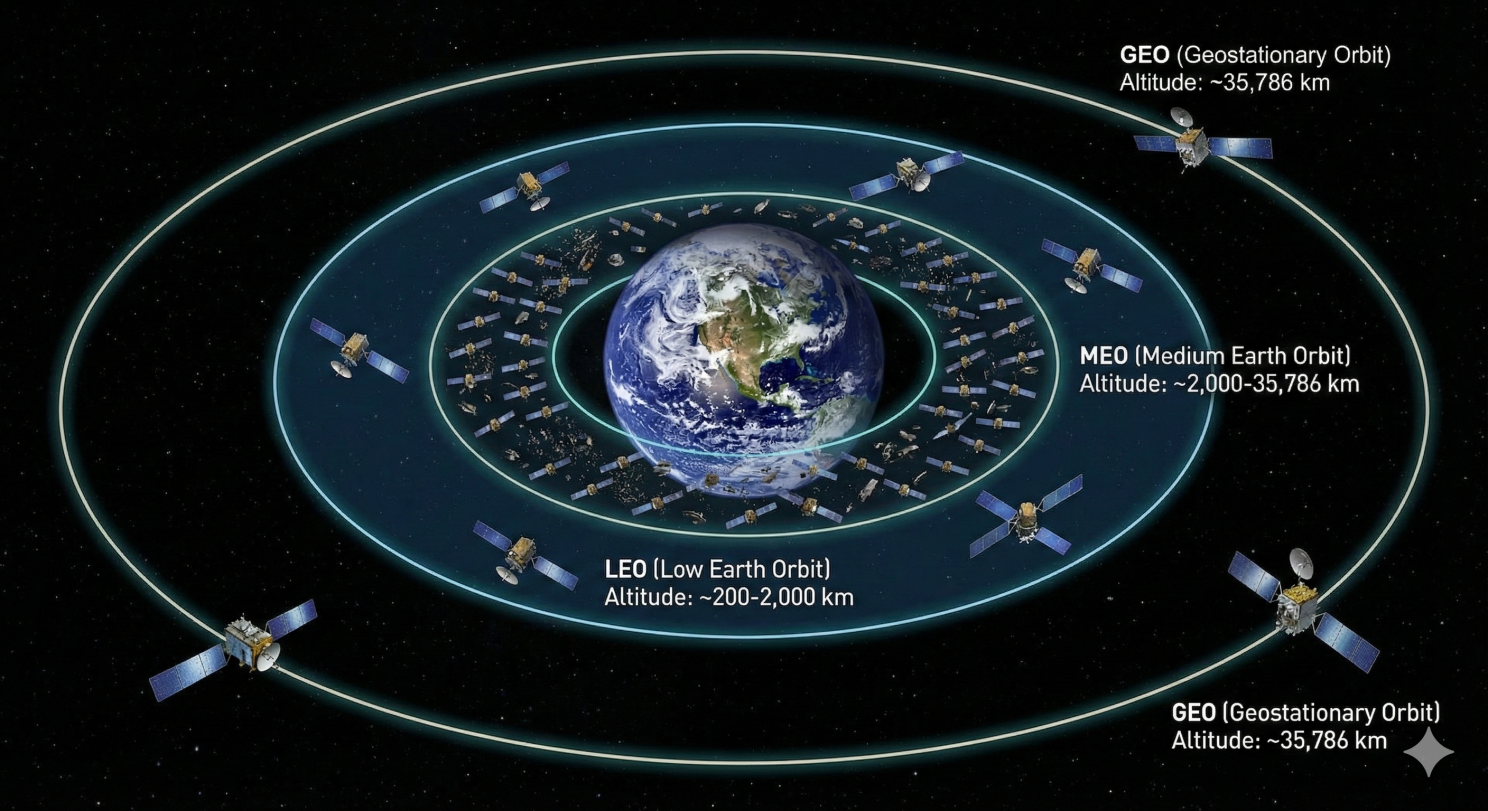

LEO vs. GEO: Starlink operates in Low Earth Orbit (LEO), which offers benefits like lower latency and easier deployment. But LEO satellites have shorter lifespans, typically 5–10 years before atmospheric drag causes orbital decay.

For a data center, that’s a problem. Do you really want to replace your entire compute infrastructure every 5 years?

Geosynchronous orbit (GEO) offers longer operational life and stable positioning, but higher launch costs and greater radiation exposure.

The likely answer is GEO or high-MEO (Medium Earth Orbit) for space data centers, accepting higher upfront costs for longer asset life.
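One trade-off worth quantifying here is latency, since altitude sets a hard floor on it. This sketch computes the best-case round trip for a signal going straight up and back down at the speed of light. The 550 km figure is a typical Starlink shell altitude and 35,786 km is the standard geostationary altitude; real paths are longer (slant angles, routing, processing), so these are lower bounds only.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458

def round_trip_ms(altitude_km: float) -> float:
    """Best-case ground -> satellite -> ground propagation delay in milliseconds.

    Assumes the satellite is directly overhead and ignores all processing delay.
    """
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S * 1000

leo_ms = round_trip_ms(550)      # typical LEO shell altitude
geo_ms = round_trip_ms(35_786)   # geostationary altitude

print(f"LEO round trip: {leo_ms:.1f} ms")
print(f"GEO round trip: {geo_ms:.0f} ms")
```

A few milliseconds versus a couple hundred is irrelevant for batch training jobs, but it matters for anything interactive, so the orbit choice also shapes which workloads make sense.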

The maintenance reality: when the Hubble Space Telescope launched in 1990, scientists discovered a flaw in its primary mirror, a roughly 2-micron error (about 1/50th the width of a human hair) that made images blurry. NASA spent years planning the STS-61 repair mission. In December 1993, astronauts conducted five spacewalks over 11 days to install corrective optics.

It worked. But the point is: space maintenance is incredibly difficult and expensive.

For space data centers, the operating model must assume zero maintenance over the asset’s lifespan.

This leads to two critical design requirements:

- Redundancy is mandatory. You need enough spare capacity that individual component failures don’t impact operations.

- Capacity will decline over time. Some GPUs will fail. Some memory will degrade. The business model must account for a declining capacity curve.
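Both requirements can be put into a simple planning model. The sketch below assumes failures are independent and happen at a constant annual rate with no repairs; the 5% rate and 10-year life are hypothetical placeholders, not measured numbers for any real hardware.

```python
def surviving_fraction(annual_failure_rate: float, years: int) -> float:
    """Fraction of launched capacity still working after the given years, with zero repairs."""
    return (1 - annual_failure_rate) ** years

def overprovision_factor(annual_failure_rate: float, years: int) -> float:
    """How much capacity to launch per unit of capacity needed at end of life."""
    return 1 / surviving_fraction(annual_failure_rate, years)

ASSUMED_FAILURE_RATE = 0.05  # hypothetical: 5% of remaining units fail each year
ASSET_LIFE_YEARS = 10        # hypothetical asset lifespan

for year in range(0, ASSET_LIFE_YEARS + 1, 2):
    remaining = surviving_fraction(ASSUMED_FAILURE_RATE, year)
    print(f"Year {year:2d}: {remaining:.0%} of launch capacity remaining")

end_of_life = surviving_fraction(ASSUMED_FAILURE_RATE, ASSET_LIFE_YEARS)
factor = overprovision_factor(ASSUMED_FAILURE_RATE, ASSET_LIFE_YEARS)
print(f"Launch {factor:.2f}x the capacity you need in year {ASSET_LIFE_YEARS}")
```

With these inputs you’d end the decade at roughly 60% of what you launched, which is why the pricing model has to treat capacity as a curve rather than a constant.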

This isn’t how terrestrial data centers work, where a failed component gets hot-swapped by a technician within hours. In space, that failed GPU stays failed.

Verdict: Space data centers require fundamentally different reliability and capacity planning assumptions.

Design Consideration: Radiation Hardening

Space has another challenge that doesn’t exist in your Phoenix data center: radiation.

Earth’s atmosphere protects us from most cosmic radiation and solar particle events. In orbit, especially at GEO altitudes, electronics face continuous bombardment that can cause bit flips, data corruption, and cumulative damage to silicon.

The traditional solution is radiation-hardened chips. These exist (the rad-hard chip market was approximately $1.5 billion in 2023), but there’s a problem.

Radiation-hardened processors typically lag commercial technology by 10+ years. They’re specialized, low-volume products that cost dramatically more than commercial equivalents, often $2,000+ for components that cost $2 in consumer devices.

You cannot build a competitive AI data center using decade-old chip technology.

The entire point of space data centers is to run cutting-edge AI workloads, which require cutting-edge GPUs and accelerators.

The likely approach: commercial off-the-shelf (COTS) components with system-level hardening:

- Error-correcting memory (ECC)

- Redundant compute paths

- Software-based error detection and correction

- Shielding at the enclosure level

- Acceptance of some degradation over time

This is how modern LEO constellations already operate. SpaceX doesn’t use expensive rad-hard chips in Starlink satellites; they use commercial components with system-level fault tolerance.
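To make “redundant compute paths” concrete, here’s a toy sketch of one classic pattern, triple modular redundancy: run the same work on three replicas and accept whatever the majority agrees on. This is a generic illustration, not a description of how Starlink or any specific system is built; the `checksum` workload, the payload, and the injected bit flip are all made up for the example.

```python
from collections import Counter

def flip_bit(value: int, bit: int) -> int:
    """Simulate a radiation-induced upset by flipping one bit of an integer."""
    return value ^ (1 << bit)

def majority_vote(results):
    """Return the value a strict majority of replicas agree on, else raise."""
    value, count = Counter(results).most_common(1)[0]
    if count * 2 <= len(results):
        raise RuntimeError("no majority: too many replicas disagree")
    return value

def checksum(data: bytes) -> int:
    """Stand-in for a real computation whose result we want to protect."""
    return sum(data) % 65521

payload = b"gradient shard 17"
good = checksum(payload)

# Three replicas compute the same result; one of them suffers a bit flip.
replicas = [good, flip_bit(good, 3), good]
voted = majority_vote(replicas)

print(f"Replica outputs: {replicas}")
print(f"Voted result matches the clean value: {voted == good}")
```

The cost is obvious (three times the compute for one answer), which is why real systems tend to reserve this kind of voting for critical control paths and lean on ECC and software checks elsewhere.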

Verdict: COTS + redundancy + error correction beats trying to radiation-harden the latest GPUs.

The GTM Question: Who Actually Buys This?

And now we get back to where we started: how do you actually monetize this?

Here’s the beautiful part of the SpaceX-xAI merger:

Musk doesn’t need to sell compute to anyone.

Think about the internal demand:

- xAI/Grok: Competing with ChatGPT and Claude requires massive training and inference compute

- X (Twitter): Real-time content moderation, recommendations, ad targeting

- Starlink: Network optimization, traffic management

Musk’s companies have virtually unlimited AI compute demand. Any space-based capacity SpaceX builds will be consumed internally for years… probably forever at the rate AI workloads are growing.

At the point where internal demand is satisfied (if ever), SpaceX has options:

- Sell excess capacity to Tesla at rates below market (but above marginal cost)

- Offer premium “space compute” positioning. Some customers will pay for differentiation

- Keep capacity offline as strategic reserve

In none of these scenarios does SpaceX need to win external customers to make the product financially viable.

Verdict: The go-to-market is internal consumption. External sales are upside, not the business case.

So… Is This Actually Going to Happen?

Let me be clear about what I’m not saying:

- I’m not saying space data centers will launch in 2–3 years.

- I’m not saying this will be easy.

- I’m not saying there aren’t massive technical challenges I’ve glossed over.

What I am saying is this:

The strategic logic is sound.

Every “what needs to be true” has a plausible answer:

- Ground-based capacity genuinely cannot keep pace with AI demand.

- The physics of space actually solve real problems (heat dissipation, solar power).

- The required technologies (laser communication, satellite manufacturing, launch economics) are demonstrated at scale.

- The capital exists.

- The expertise exists, uniquely, in the combined SpaceX-xAI entity.

And the go-to-market is essentially captive: Musk’s own companies provide all the demand needed to justify the investment.

While many Musk promises stray into hyperbole, I find myself in the unusual position of thinking space data centers are likely to happen. The timeline is uncertain. The execution will be harder than anyone admits. But the strategic foundation is real.

The Real Question

Here’s what I keep coming back to:

After SpaceX announced this merger, with space data centers as the explicit strategic rationale, did a bunch of strategy executives at Amazon get together and ask each other:

Should we be a fast follower?

Kuiper/Leo + AWS + Blue Origin is the only combination that could conceivably compete. And Amazon has proven they’re willing to make decade-long infrastructure bets.

I don’t know if they’re having that conversation… but I’d bet money they are.